What Is Word Embedding?

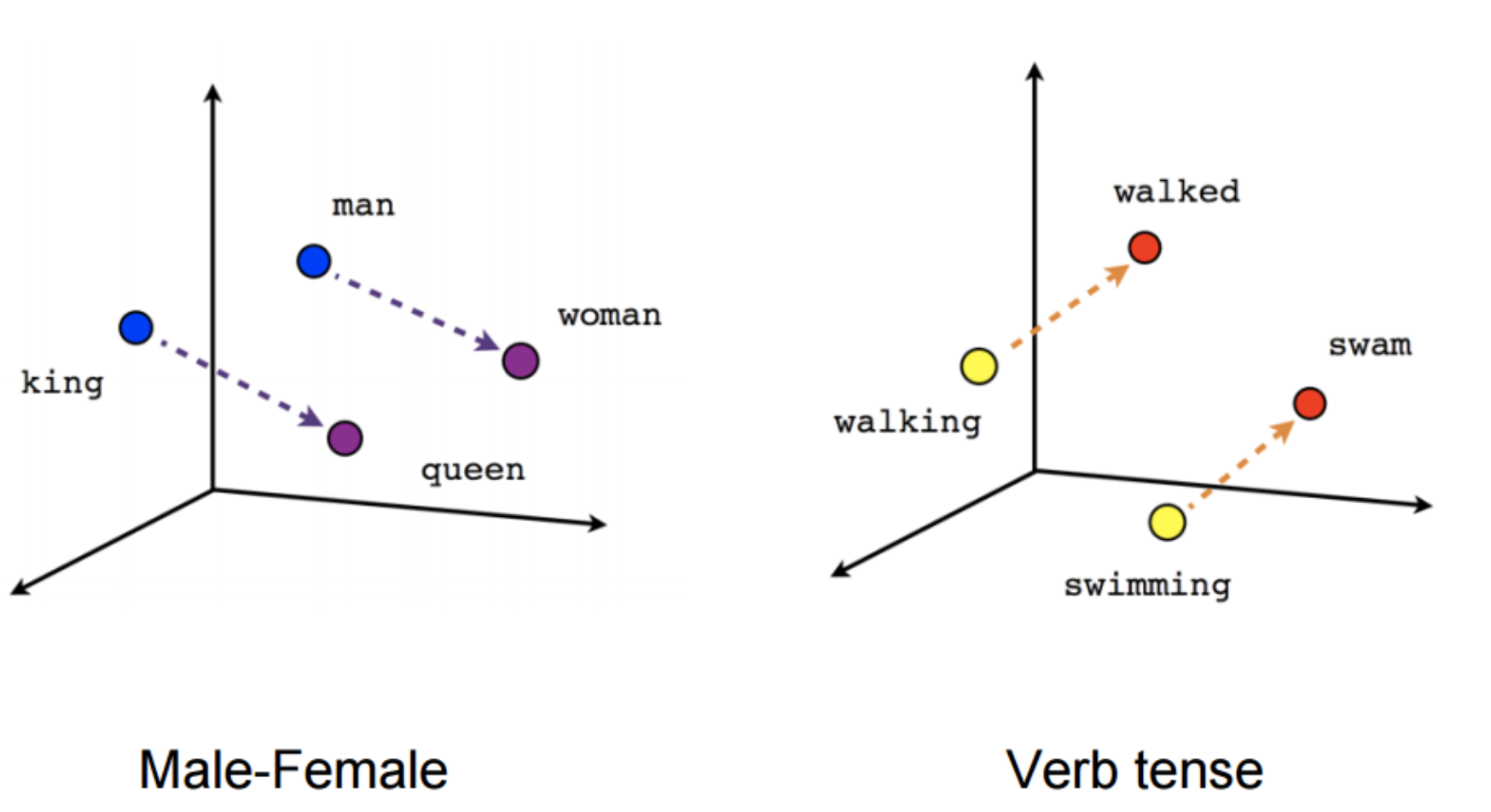

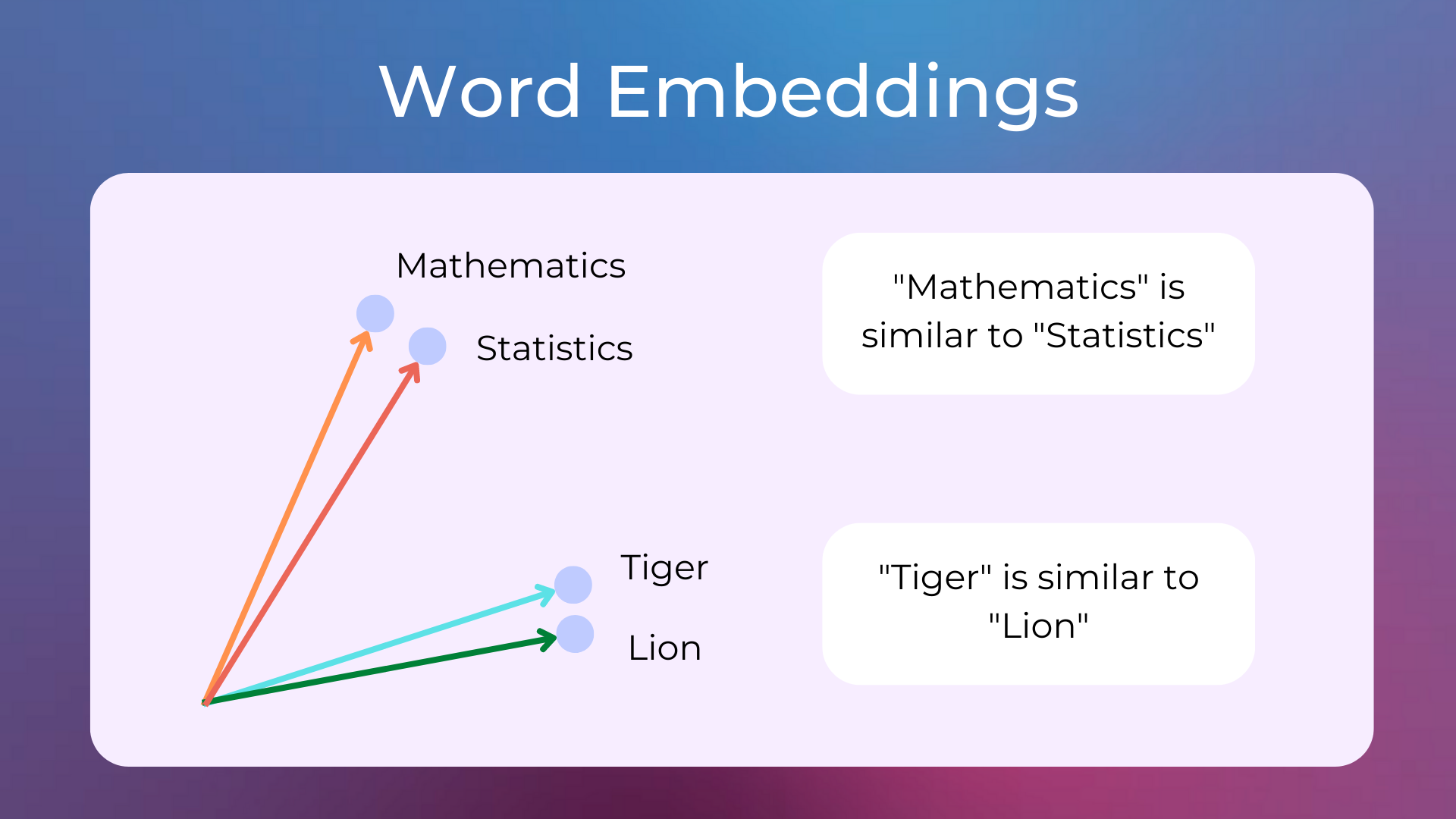

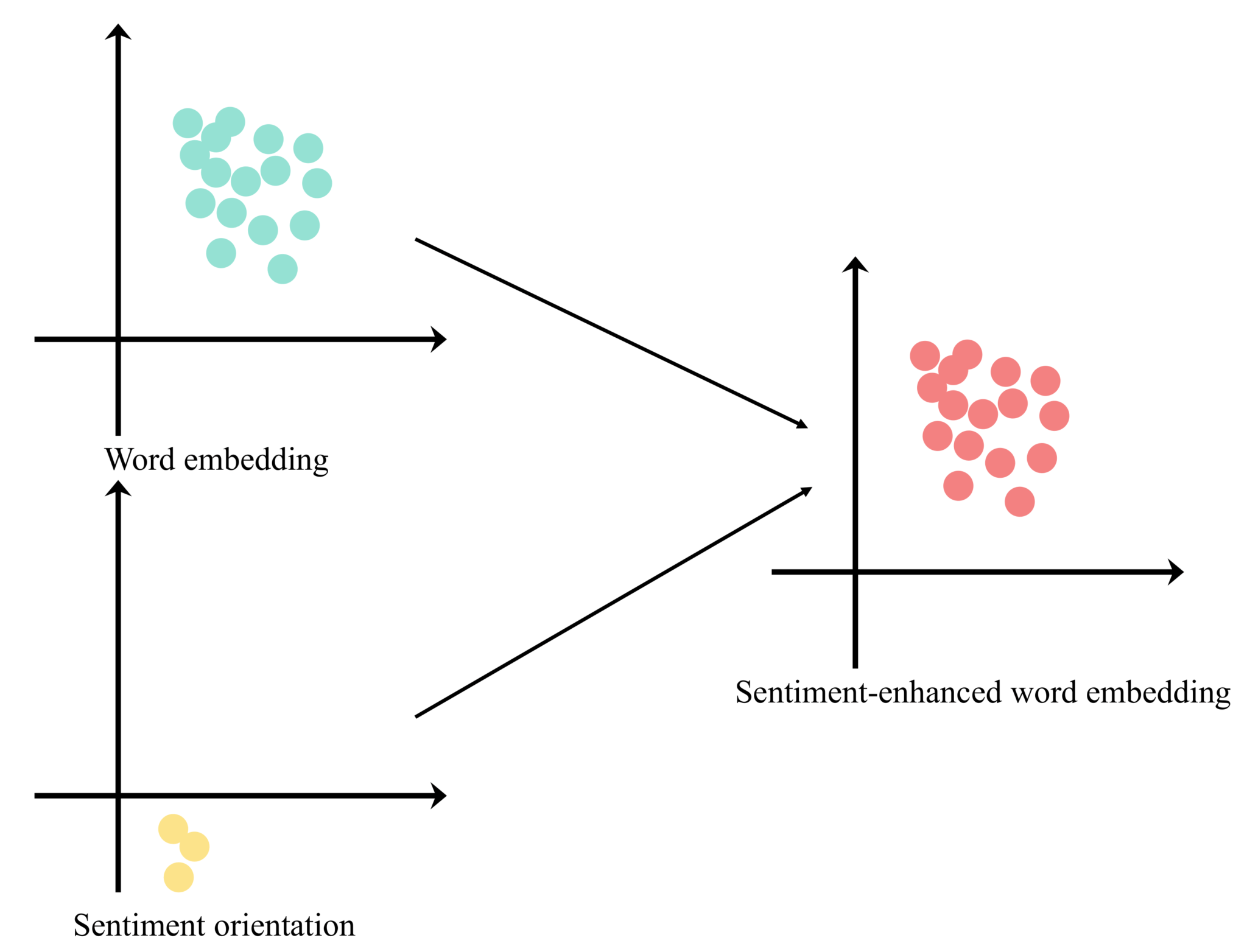

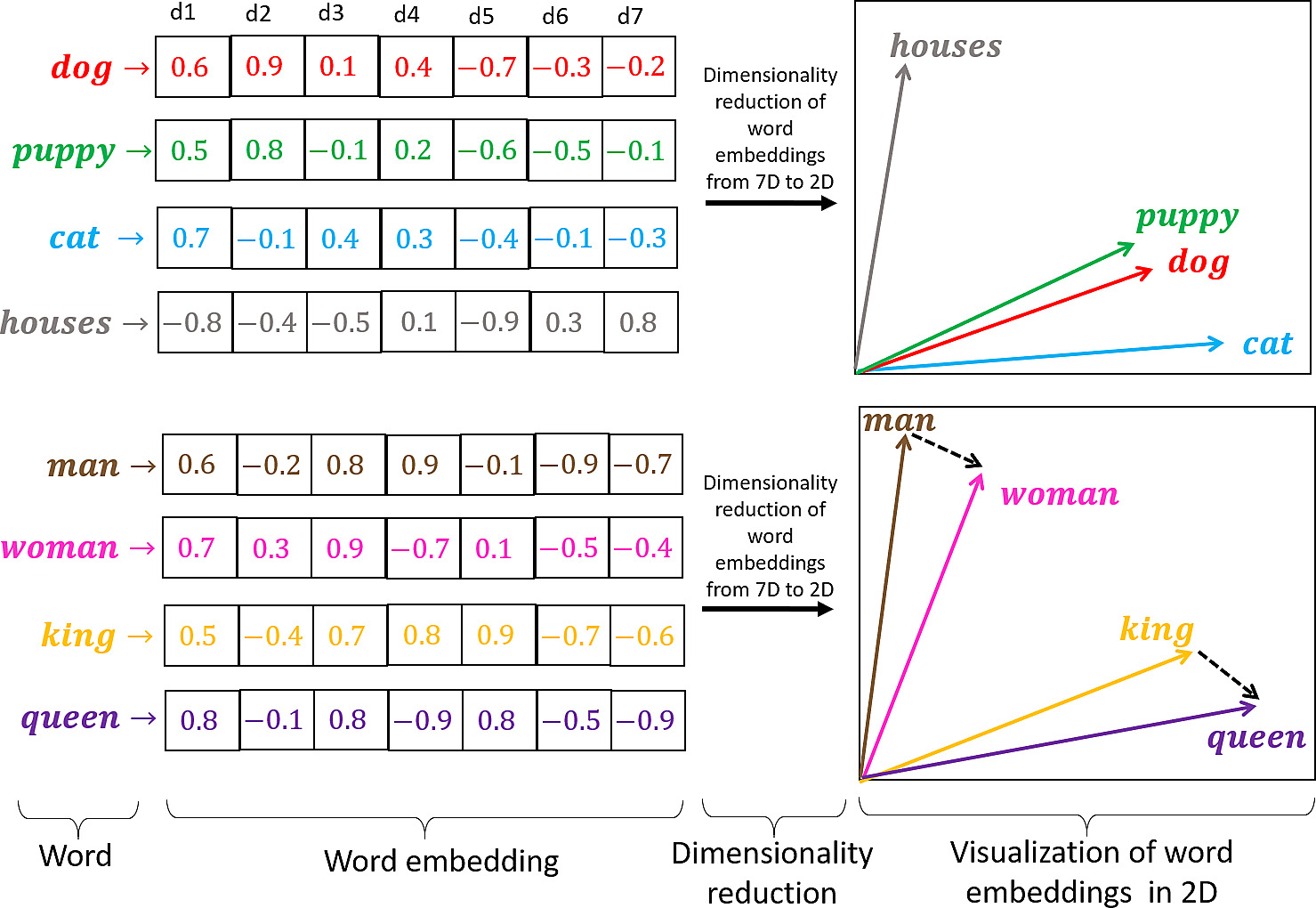

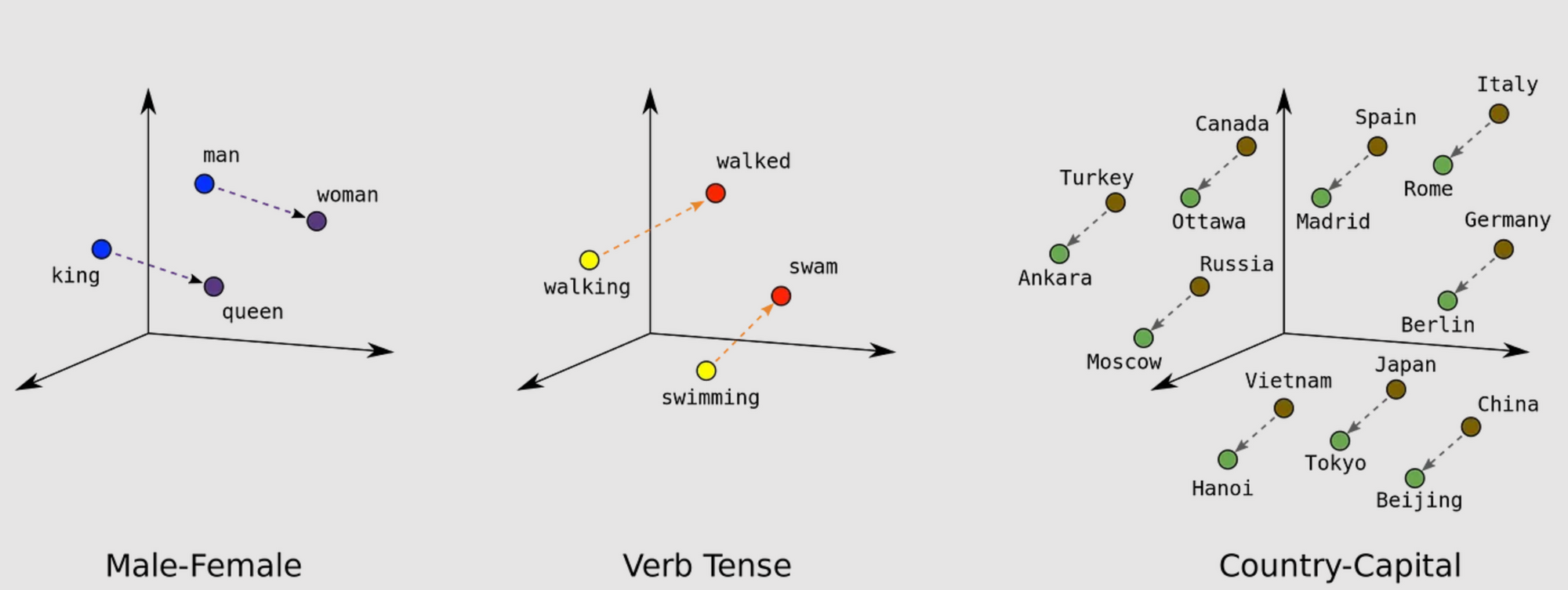

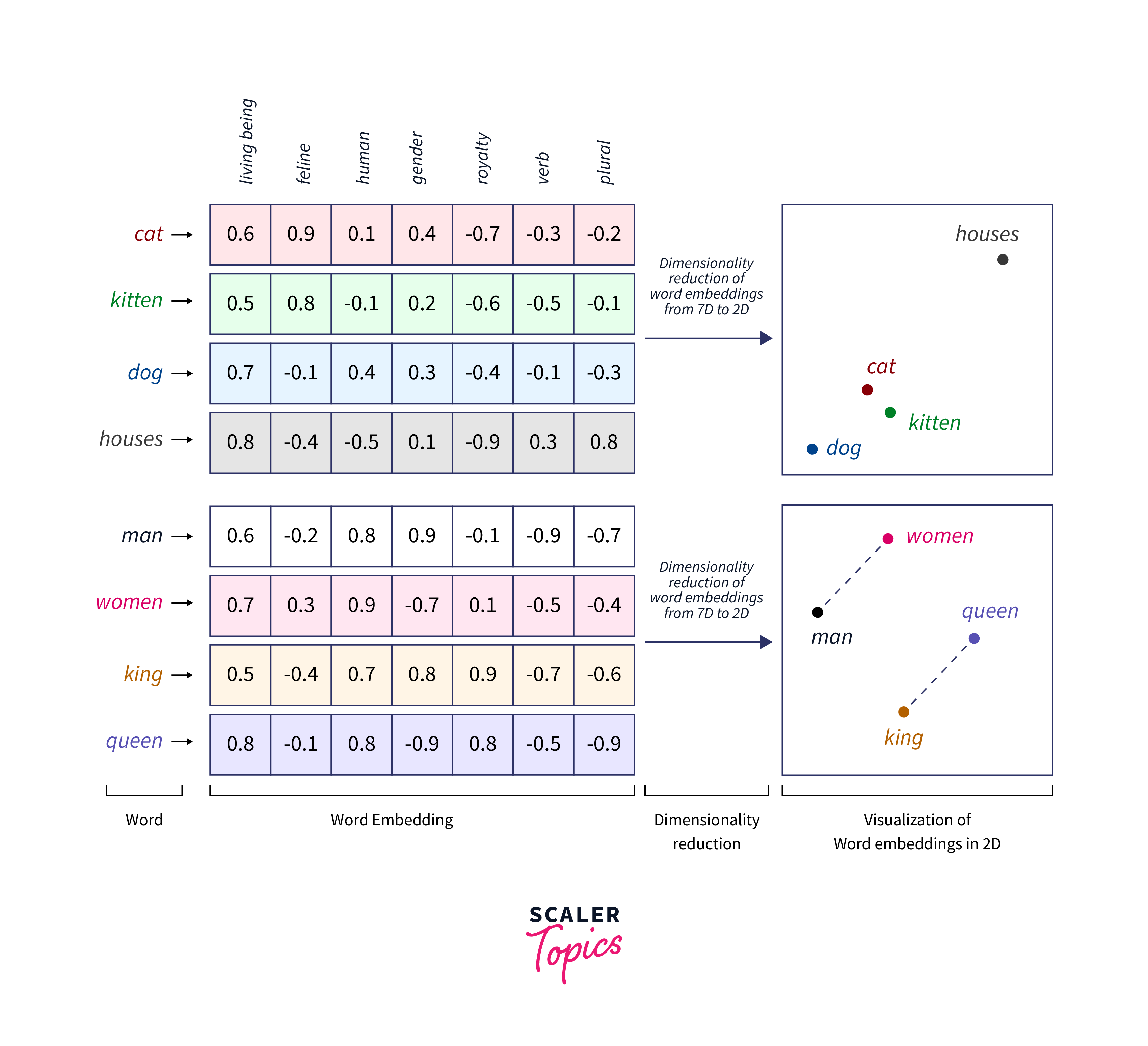

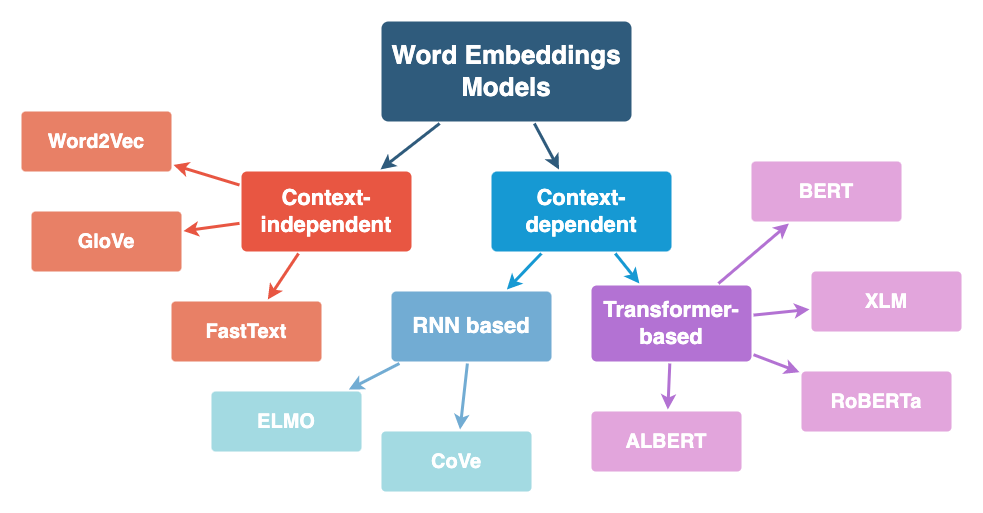

Word embeddings are dense vectors of real numbers, one per word in your vocabulary. They transform textual data, which machine learning algorithms cannot process directly, into a numerical form they can work with. In NLP, it is almost always the case that your features are words. Embeddings also capture semantic relationships between words, which lets models represent words in a continuous vector space.
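The idea of "one dense vector per word in the vocabulary" can be sketched as a simple lookup table. The snippet below is a minimal illustration in plain Python, not any particular library's API: the helper names (`build_vocab`, `init_embeddings`, `embed`, `cosine_similarity`), the toy corpus, and the dimension of 4 are all assumptions made for the example. The vectors are randomly initialized, so they carry no semantic meaning here; in practice they would be learned from data (for example with word2vec or GloVe).

```python
import math
import random


def build_vocab(sentences):
    """Map each distinct lowercase token to an integer index."""
    vocab = {}
    for sentence in sentences:
        for token in sentence.lower().split():
            if token not in vocab:
                vocab[token] = len(vocab)
    return vocab


def init_embeddings(vocab_size, dim, seed=0):
    """Create one dense vector of `dim` real numbers per vocabulary entry.

    Values are random placeholders; a real model would train them.
    """
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)]
            for _ in range(vocab_size)]


def embed(sentence, vocab, table):
    """Turn a sentence into a list of vectors by table lookup."""
    return [table[vocab[token]] for token in sentence.lower().split()]


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical toy corpus, used only to build a small vocabulary.
corpus = ["the cat sat on the mat", "the dog sat on the rug"]
vocab = build_vocab(corpus)
table = init_embeddings(len(vocab), dim=4)

# Text in, numbers out: each token becomes a 4-dimensional vector.
vectors = embed("the cat sat", vocab, table)
```

With trained embeddings, `cosine_similarity` is a common way to compare two word vectors: words used in similar contexts tend to score closer to 1.0 than unrelated words.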

![Word Embedding Guide](https://iq.opengenus.org/content/images/2021/09/word-embedding-header.png)